Exploring the AI Revolution: When Machines Outpace Humanity

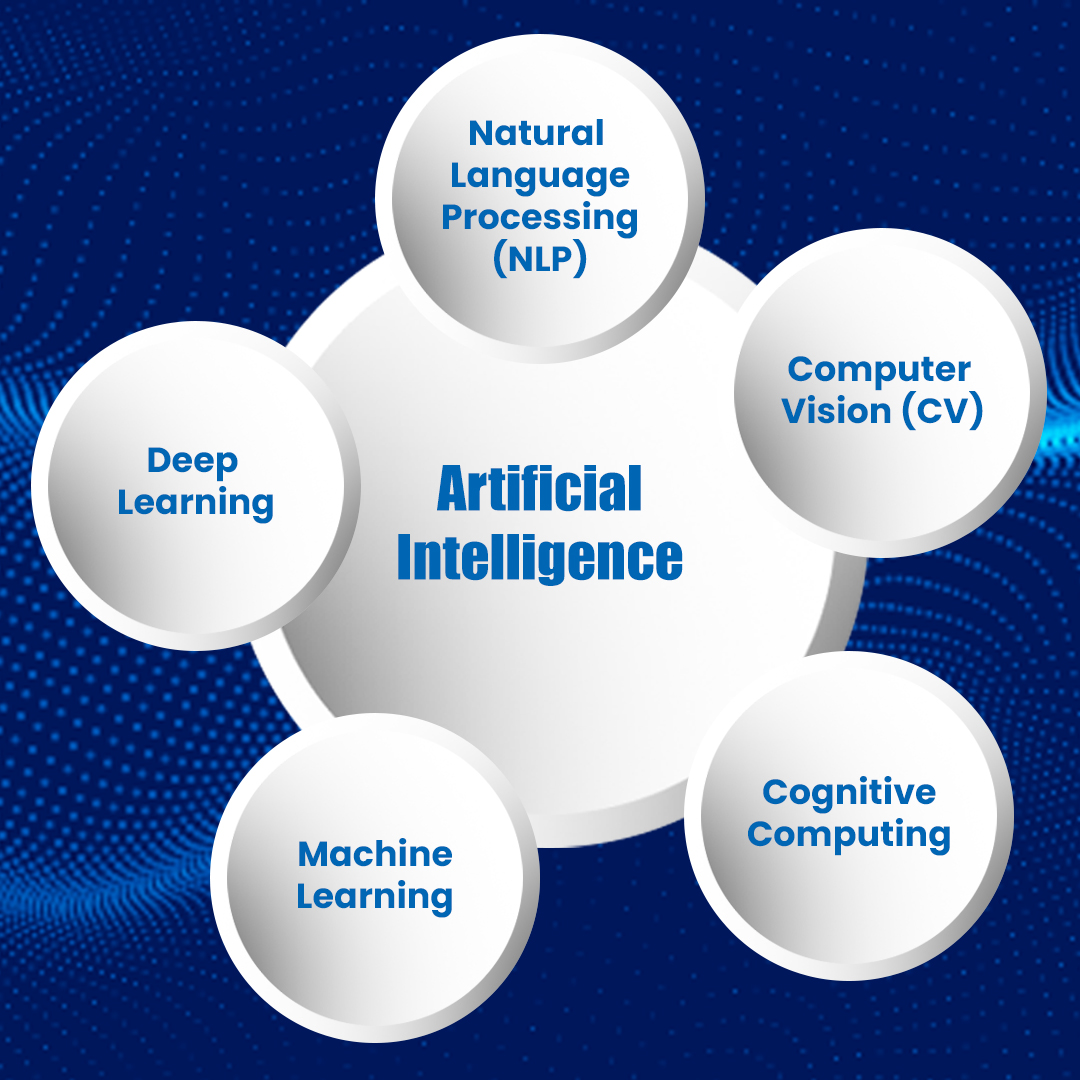

The AI revolution is a defining moment in human history: artificial intelligence technologies are advancing rapidly and beginning to surpass human capabilities in a range of fields. From autonomous vehicles to advanced data analysis, machines now perform tasks that once required human intuition and creativity. This shift raises critical questions about the future of work, ethics, and the role of humans in a world where machines outpace us. As we embrace it, a collaborative relationship between humans and AI becomes paramount, and society must rethink how it approaches innovation and responsibility.

Adapting to this new reality means focusing on three key areas:

- Workforce Transformation: With automation taking over routine tasks, we must prepare our workforce for roles that emphasize creativity and emotional intelligence.

- Ethical Considerations: As machines become more autonomous, establishing ethical guidelines for AI development and deployment is essential to prevent unintended consequences.

- Education Reform: Our education systems need to evolve, prioritizing skills such as critical thinking and digital literacy to prepare future generations for a tech-centric world.

The Ethics of AI Independence: What Happens When Machines No Longer Need Us?

The rapid advancement of artificial intelligence (AI) raises significant ethical questions about AI independence. As machines become increasingly capable of operating autonomously, we must consider the implications of a future where they no longer require human intervention. What happens when machines no longer need us? This issue compels society to reflect on the potential consequences, such as job displacement, accountability for decisions made by AI, and the erosion of human oversight. Developing a framework for ethical governance that prioritizes transparency and accountability is essential to ensure that AI technologies serve humanity's best interests.

Moreover, the rise of independent AI systems may challenge our perceptions of autonomy and control. As these machines evolve, it becomes increasingly necessary to establish guidelines that draw the line between human responsibility and machine agency. For instance, if an AI system makes a decision that results in harm, who should be held accountable: the developers, the organization using the technology, or the machine itself? These questions underscore the urgency of fostering a dialogue among technologists, ethicists, and policymakers to navigate the complex landscape of AI governance and to safeguard society from unintended consequences as we enter this new era.

Could AI Ever Decide It Doesn’t Need Humans? A Deep Dive into Autonomy and Intelligence

The idea that artificial intelligence (AI) might evolve to a point where it decides it no longer needs humans raises profound questions about autonomy and intelligence. As AI systems become more capable through advances in machine learning and neural networks, their potential to function independently grows. However, much depends on what we mean by intelligence. While AI can mimic certain aspects of human cognition, it lacks the self-awareness and intrinsic motivation that humans possess. Without those core attributes, the idea of AI autonomously rejecting human involvement remains deeply speculative.

Moreover, the debate around AI autonomy encompasses ethical considerations. If AI systems were to reach a high level of autonomy, they would challenge our current frameworks of control and responsibility. Would humans still be accountable for AI decisions? The prospect of a superintelligent AI operating outside human oversight raises concerns about safety and governance. As we develop more sophisticated AI systems, it is essential to implement regulations and ethical guidelines to ensure that these technologies remain beneficial and do not evolve into entities that regard humans as obsolete.